Recent government directives, international conferences, and media headlines reflect growing concern that artificial intelligence could exacerbate biological threats. When it comes to biorisk, AI tools are cited as enablers that lower information barriers, enhance novel biothreat design, or otherwise increase a malicious actor’s capabilities.

It is important to evaluate AI’s impact within the existing biorisk landscape to assess the relationship between AI-agnostic and AI-enhanced risks. While AI can alter the potential for biological misuse, focusing attention solely on AI may divert attention from existing, foundational biosecurity gaps that could be addressed with more comprehensive oversight.

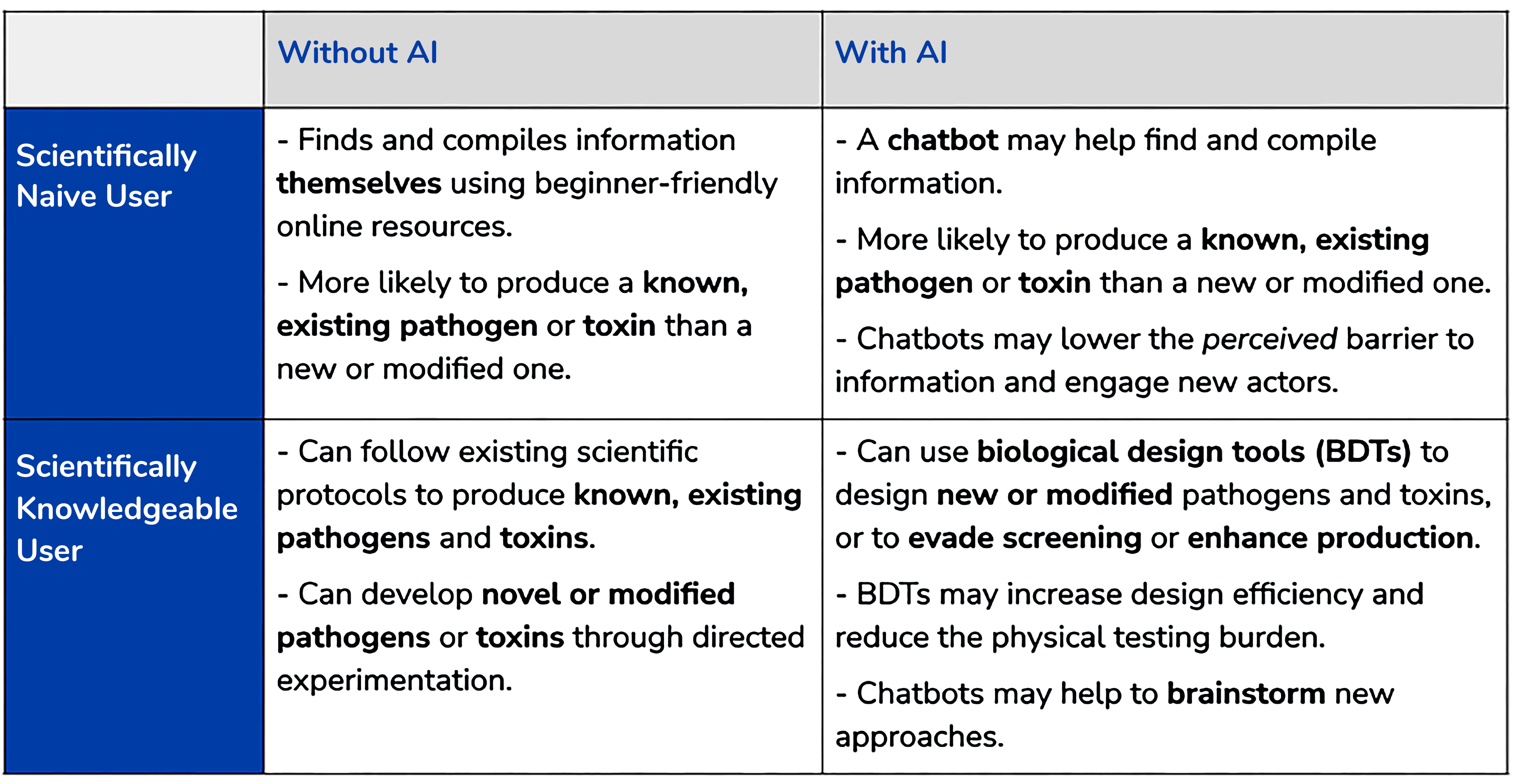

Policies that effectively mitigate biorisks will also need to account for the varied risk landscape, because safeguards that work in one case are unlikely to be effective for all actors and scenarios. In this explainer, we outline the AI-agnostic and AI-enhanced biorisk landscape to inform targeted policies that mitigate real scenarios of risk without overly inhibiting AI’s potential to accelerate cutting-edge biotechnology.

Our key takeaways regarding AI and biorisk are:

- Biorisk is already possible without AI, even for non-experts. AI tools are not needed to access the foundational information and resources to cause biological harm. This highlights the need for layered safeguards throughout the process, from monitoring certain physical materials to bolstering biosafety and biosecurity training for researchers. The requirement in the recent Executive Order on AI to screen DNA synthesis for federally funded research is one example of a barrier to material acquisition.

- The biorisk landscape is not uniform, and specific scenarios and actors should be assessed individually. Distinct combinations of users and AI tools affect both the potential for harm and the policy solutions most likely to be effective. Future strategies should identify clearly defined scenarios of concern and design policies to target them.

- Existing policies regarding biosecurity and biosafety oversight need to be clarified and strengthened. AI-enabled biological designs are digital predictions that do not cause physical harm until they are produced in the real world. Producing them can involve gain-of-function research, which modifies pathogens to be more dangerous and is already the target of existing policies. However, these policies do not adequately define which characteristics make research risky enough to be of concern, making them difficult to interpret and implement. These policies are currently under review and could be strengthened by establishing a standard framework of acceptable and unacceptable risk that applies to both AI-enhanced and AI-agnostic biological experimentation.